Intelligent Control and Manufacturing in the Agentic Era

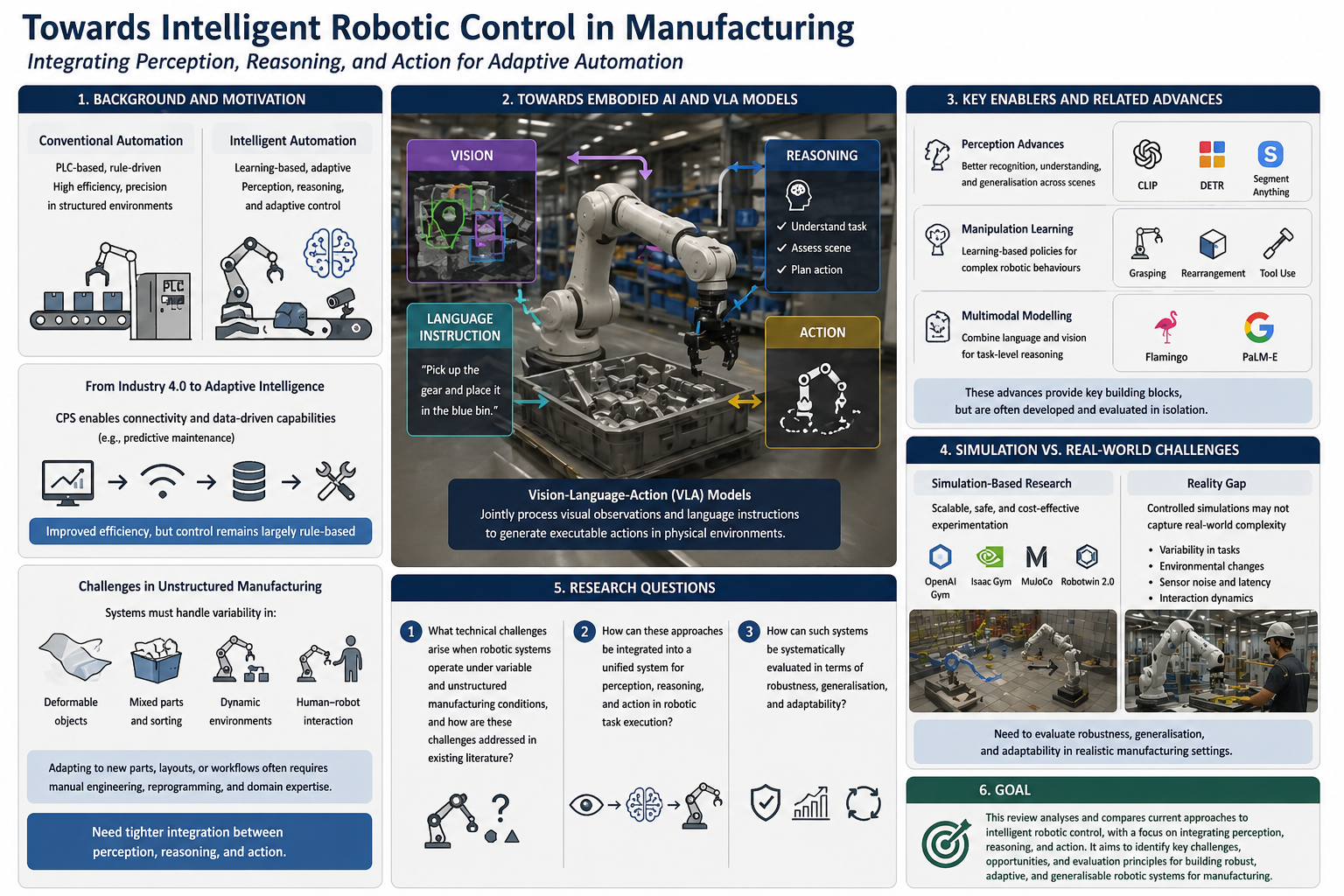

The rapid advancement of artificial intelligence (AI) is increasingly reshaping modern manufacturing systems, particularly in intelligent automation and robotics. Conventional industrial automation relies primarily on programmable logic controllers (PLCs), predefined workflows, and rule-based execution pipelines, which deliver high efficiency, precision, and repeatability in structured production environments. Intelligent automation, by contrast, refers here to systems that incorporate learning-based methods to support perception, decision-making, and adaptive control. More recently, Industry 4.0 and cyber-physical systems (CPS) have enhanced connectivity and data-driven capabilities in manufacturing; for example, CPS-enabled production lines can continuously monitor machine states and predict potential failures, enabling predictive maintenance and improving operational efficiency. Despite these improvements, the underlying control paradigm in many systems remains largely rule-based and task-specific. This limitation becomes more apparent in less structured manufacturing scenarios, such as handling deformable objects, sorting mixed parts, or operating in shared human-robot workspaces, where systems must respond to variations in object properties, scene configurations, and task conditions.

In such settings, industrial automation is often realised through robotic platforms, which must perceive their surroundings, reason about task conditions, and adapt their actions accordingly. Tasks such as material handling, part sorting, assembly, and tool interaction therefore require continuous perception and decision-making rather than fixed execution. Existing industrial systems often struggle under these conditions because they are typically designed around fixed assumptions about object geometry, task sequences, and environmental stability. As a result, adapting them to new parts, layouts, or workflows frequently requires manual engineering, reprogramming, and domain expertise. These challenges suggest that improving robotic behaviour in manufacturing requires not only more accurate perception or stronger control, but also tighter integration of perception, reasoning, and action.
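To make the contrast between fixed execution and continuous perception concrete, the following is a deliberately simplified toy sketch; it is not drawn from any of the reviewed systems. It assumes a hypothetical conveyor with numbered slots and two part types. `open_loop_sort` replays a plan taught once for a nominal layout, while `closed_loop_sort` observes each part before choosing a bin. All names (`Part`, `open_loop_sort`, `closed_loop_sort`, `count_missorted`) and the scenario itself are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Part:
    kind: str  # hypothetical part type, e.g. "bolt" or "nut"
    slot: int  # conveyor slot where the part actually sits


def open_loop_sort(parts, taught_plan):
    """Replay a fixed plan of (slot, bin) pairs taught for one nominal layout.

    The plan never checks what is in the slot, mirroring rule-based execution
    under a fixed assumption about where each part type appears.
    """
    bins = {"bolt": [], "nut": []}
    by_slot = {p.slot: p for p in parts}
    for slot, target_bin in taught_plan:
        part = by_slot.get(slot)
        if part is not None:
            bins[target_bin].append(part.kind)
    return bins


def closed_loop_sort(parts):
    """Observe each part before acting, then choose the bin from the observation."""
    bins = {"bolt": [], "nut": []}
    for part in sorted(parts, key=lambda p: p.slot):
        observed_kind = part.kind  # stand-in for a (hypothetical) perception step
        bins[observed_kind].append(part.kind)
    return bins


def count_missorted(bins):
    """Number of parts placed in a bin that does not match their type."""
    return sum(kind != bin_name for bin_name, items in bins.items() for kind in items)


# Plan taught on the nominal layout: bolt, nut, bolt in slots 0, 1, 2.
taught_plan = [(0, "bolt"), (1, "nut"), (2, "bolt")]

# At run time the layout has shifted: the nut now arrives first.
shifted = [Part("nut", 0), Part("bolt", 1), Part("bolt", 2)]

print(count_missorted(open_loop_sort(shifted, taught_plan)))  # prints 2
print(count_missorted(closed_loop_sort(shifted)))  # prints 0
```

The sorting logic is trivial by design; the point is that the open-loop program is correct only while its layout assumption holds. In real systems the perception step, reduced here to reading `part.kind`, is the difficult part and is where learned models come in.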

Recent research in embodied AI has explored more integrated approaches to robotic control. Embodied AI refers to systems that learn to act in physical environments by linking perception, reasoning, and action through interaction with the world. Within this area, vision-language-action (VLA) models have emerged as a promising direction for integrated robotic decision-making. VLA models jointly process visual observations (e.g., images of the environment) and language instructions (e.g., task descriptions) to generate executable actions, thereby connecting perception, semantic reasoning, and control within a unified framework. Related work, including *Do As I Can, Not As I Say* (SayCan), *Code as Policies*, and *Inner Monologue*, further shows that language can help specify task goals, guide planning, and support decision-making in robotic systems. Here, embodied reasoning refers to a system's ability to make decisions based on both its sensory inputs and its interaction with the environment, rather than relying solely on predefined rules or abstract representations. Compared with conventional modular systems, these approaches may offer greater flexibility in handling high-level instructions, task variation, and open-ended environments.

At the same time, the development of VLA models builds on advances in several related research areas, including computer vision, manipulation learning, and multimodal modelling. In perception, models such as CLIP, DETR, and Segment Anything have substantially improved machines' ability to recognise objects, understand scene structure, and generalise across visual inputs. In manipulation, reinforcement learning and other learning-based policy methods have enabled increasingly capable robotic behaviours in tasks such as grasping, rearrangement, and tool use. In multimodal modelling, systems such as Flamingo and PaLM-E demonstrate how combining language and visual representations can enhance task-level reasoning and generalisation. Together, these advances provide key building blocks for more integrated robotic systems. However, many existing approaches are still developed and evaluated as separate components, which may limit their effectiveness when deployed on real robots operating in closed-loop settings.

A further challenge is that much of the current literature remains centred on controlled benchmarks, simulated environments, or narrowly defined tasks. Simulation platforms and toolkits such as OpenAI Gym, Isaac Gym, and MuJoCo, together with sim-to-real techniques such as domain randomisation, have played an important role in accelerating research progress and enabling scalable experimentation. More recent systems, such as RoboTwin 2.0, aim to improve robustness by increasing simulation diversity. However, these approaches are often evaluated in controlled or simplified environments that may not fully capture the complexity of real-world manufacturing. Consequently, while simulation-based methods are effective for developing and testing models, there remains a need to better understand how such systems behave under more realistic conditions, including variability in tasks, environments, and interaction dynamics.

Against this background, this literature review examines current research on intelligent robotic control for manufacturing, focusing on how different approaches integrate perception, reasoning, and action in robotic systems. Rather than only summarising representative work, the review compares and analyses existing methods in terms of their assumptions, capabilities, and limitations. It is guided by three research questions:

1. What technical challenges arise when robotic systems operate under variable and unstructured manufacturing conditions, and how does the existing literature address them?
2. How can these approaches be integrated into a unified system for perception, reasoning, and action in robotic task execution?
3. How can such systems be systematically evaluated in terms of robustness, generalisation, and adaptability?